What is Azure Data Factory? Why should you use it or learn about it? What are the advantages and disadvantages of using Azure Data Factory?

Keep reading to find out the basics of Azure Data Factory.

What is Azure Data Factory?

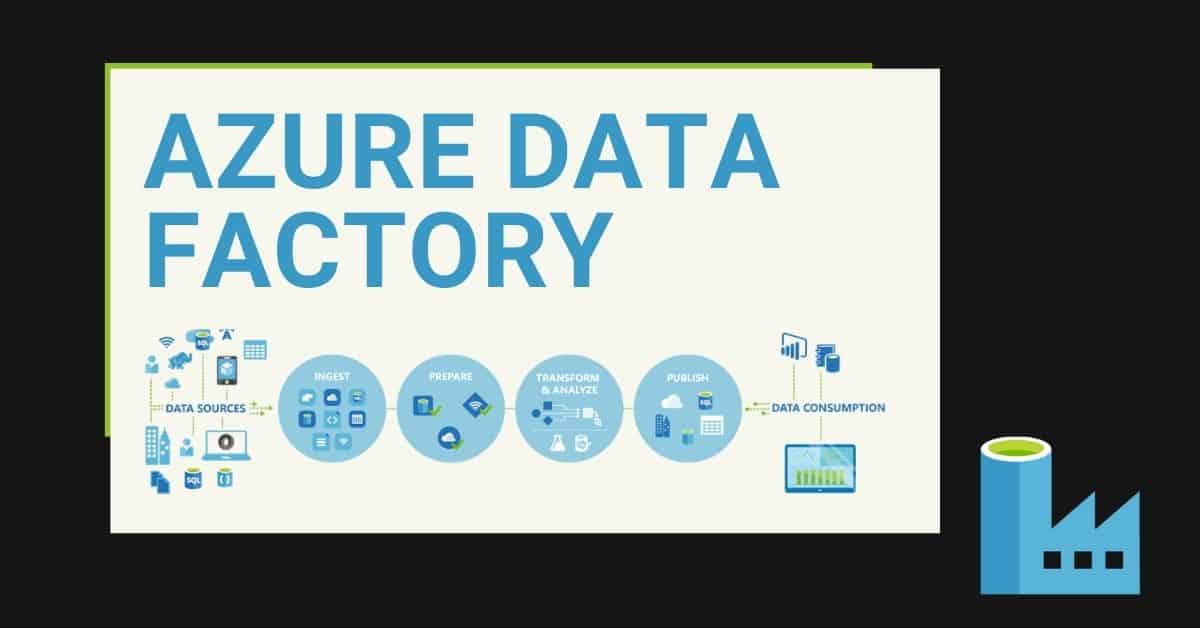

To begin, Azure Data Factory (ADF) helps you design data movement solutions for on-premises sources or any cloud provider. It is a serverless offering that lets you perform enterprise-scale data movement and transformation, while you govern the service itself through the Azure ecosystem.

The evolution of ADF as a tool and adoption of it during the past few years has been amazing. In only a few years, the Product Team has been able to deliver a high-quality orchestration and data movement tool.

ADF is built for enterprise data analytics workloads and can move large or small volumes of data. If you are looking at building solutions in the application integration space, I suggest having a look at Azure Logic Apps instead.

Key components in Azure Data Factory for building a solution

Development models in Azure Data Factory

- Only pipeline activities

- Mapping Data Flows

- Power Query

- SQL Server Integration Services in ADF (SSIS Integration Runtime)

Why should you use Azure Data Factory?

In order to answer this question, here is a list of 20 reasons why you should be using Azure Data Factory.

#1 Ability to use Azure Data Factory is a skill in high demand

Firstly, the rise of cloud technologies and the need for data tools helped Azure Data Factory grow quickly.

The ability to use Azure Data Factory is already a highly demanded skill in the data analytics space, and I expect it to stay that way. As the number of skilled users grows, the pace of new ADF feature releases should increase as well.

#2 Frequent updates and features released in Azure Data Factory

Secondly, Microsoft releases new features in the service every month. Likewise, existing features receive monthly updates.

#3 Documentation

Documentation has greatly improved during the past few years. A lot of resources are available through the Microsoft documentation, along with additional information provided by the community.

#4 Easy to get started with

It is easy to get started with ADF thanks to the great tutorials available in the Microsoft documentation and Learning Paths. There is a large amount of community-written documentation as well.

Beginners as well as experts can navigate the user interface. For example, you can build solutions using the Data Copy Wizard or by dragging and dropping components. This makes it accessible to everyone.

#5 Cost-effective service

I think that I can use the magic phrase “it depends on your requirements”, but the reality is that if you are applying best practices and you know the ins and outs of Azure Data Factory, you will achieve a cost-effective solution.

There aren’t different prices for connectors. You can use all of them for the same price.

#6 Pay-as-you-go and Scale

Additionally, there is no requirement for an additional monthly or annual license or up-front payments. You simply pay for what you use.

You can also scale up and down your data flows, SSIS nodes, Data Integration Units, etc. when your workloads require more or fewer resources.
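As a rough illustration of the pay-for-what-you-use idea, the sketch below estimates the cost of a few copy runs from the Data Integration Units they used and how long they ran. The `CopyRun` class, the `estimate_copy_cost` function, and the rate are all hypothetical: the rate is a made-up placeholder, not a real Azure price, and the sketch ignores every other meter (such as orchestration runs) and any rounding rules that actual billing may apply.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class CopyRun:
    """One copy activity run (hypothetical structure for this sketch)."""
    diu: int               # Data Integration Units used by the run
    duration_hours: float  # how long the run lasted, in hours


def estimate_copy_cost(runs: Iterable[CopyRun], rate_per_diu_hour: float) -> float:
    """Sum DIU x hours x rate over all runs. The rate must come from your own pricing lookup."""
    return sum(run.diu * run.duration_hours * rate_per_diu_hour for run in runs)


if __name__ == "__main__":
    PLACEHOLDER_RATE = 0.25  # made-up number, NOT an actual Azure price
    runs = [
        CopyRun(diu=4, duration_hours=0.5),
        CopyRun(diu=8, duration_hours=0.25),
    ]
    print(f"Estimated copy cost: {estimate_copy_cost(runs, PLACEHOLDER_RATE):.2f}")
```

The point is only that cost follows usage: doubling the DIUs or the run time doubles that part of the bill, and a run that never executes costs nothing.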

#7 Code Version Control

Full integration with Git-based version control, including GitHub. Nobody can make up any more excuses not to use it.

#8 Continuous integration and continuous deployment (CI/CD)

Full integration with Azure DevOps for CI/CD across different environments, and it is easy to set up.

#9 Monitor Alerts

Full integration with Azure Monitor Alerts and Log Analytics. There is no need to build additional alerting frameworks (check out these posts on Azure Data Factory Alerts and Azure Log Analytics).

#10 Web Portal

Design and develop from the web portal, without provisioning additional workstations or servers.

#11 Volume, Variety and Velocity

The 3 Vs for data integration are covered in Azure Data Factory. It doesn’t matter how big your datasets are, what their structure is, or how fast you need to load them. Azure Data Factory provides tools and is integrated with other Azure Services to cover any scenario.

In my opinion, ADF will be valid in the long term to cover any of your data analytics needs.

#12 Platform-as-a-Service (PaaS)

Azure manages the ADF platform and the backend. It’s part of the Platform-as-a-Service (PaaS) offering. You don’t have to manage any hardware/middleware or patching.

#13 Built-in Connectors and Azure Integration

There are more than 85 connectors and the number continues to grow. This includes some generic connectors like HTTP or ODBC in case you can’t find a built-in connector.

Azure Data Factory is also fully integrated with other Azure services like Azure Functions, Azure Databricks, Azure Data Explorer, Azure Data Lake and more.

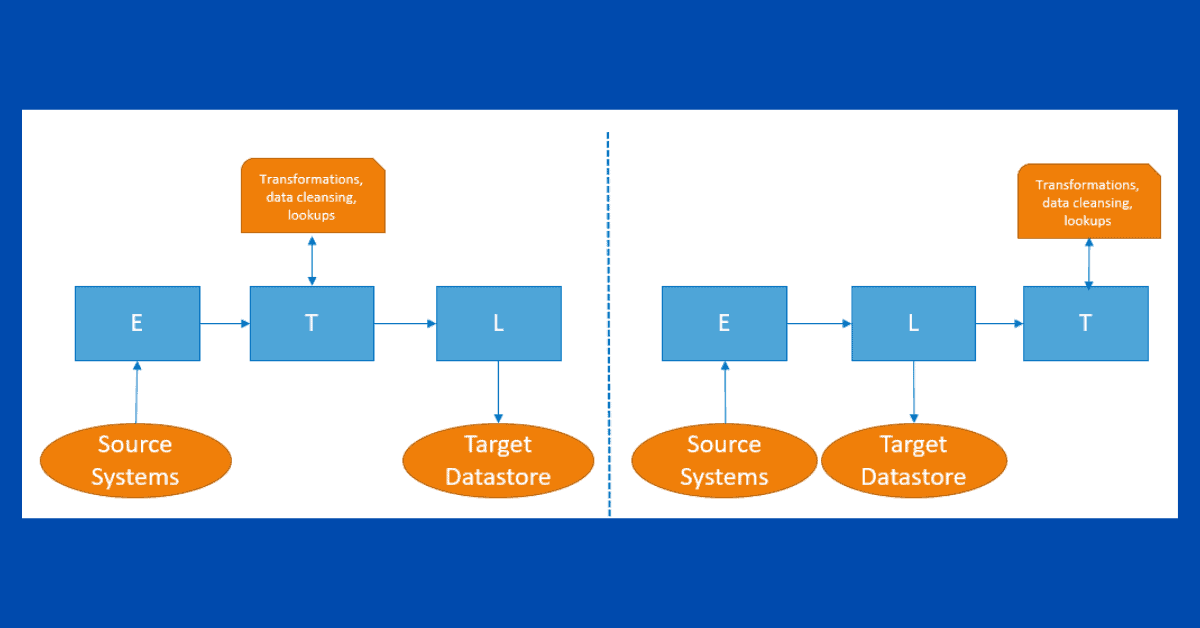

#14 ETL and ELT

You can perform ETL (Extract-Transform-Load) using data flows or ELT (Extract-Load-Transform) using only common pipeline activities.

#15 SSIS Investment

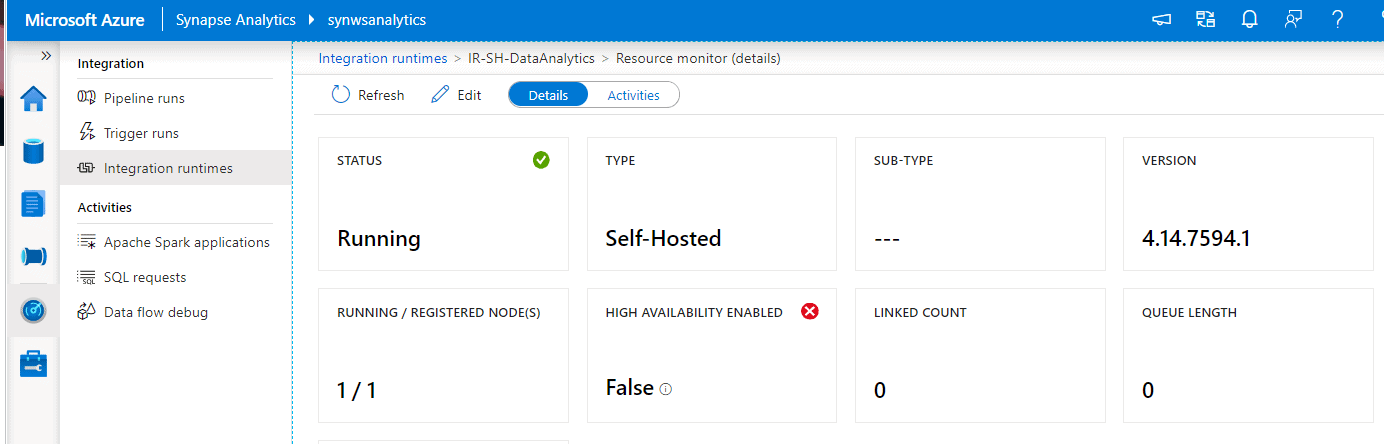

Also, if you have made a large investment in SQL Server Integration Services (SSIS) solutions and they work for you, you don’t need to throw away that investment. You can take advantage of ADF to execute your packages in a managed environment.

#16 Parallel execution

Parallel execution of activities is built in. So, if you are trying to load many data assets at the same time, you can achieve this easily without building big frameworks.
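To make this more concrete, here is a minimal sketch that builds a ForEach activity definition as a Python dictionary and prints it as JSON. It assumes the ForEach settings `isSequential` and `batchCount` control parallel iteration, and that 50 is the upper limit for `batchCount` (worth verifying against the current documentation). The activity names, the `tableList` pipeline parameter, and the inner Wait activity are hypothetical placeholders for whatever load logic you would really run.

```python
import json


def build_parallel_foreach(items_expression: str, batch_count: int = 20) -> dict:
    """Return a ForEach activity definition whose iterations run in parallel."""
    # Assumption: batchCount accepts values from 1 to 50.
    if not 1 <= batch_count <= 50:
        raise ValueError("batch_count must be between 1 and 50")
    return {
        "name": "LoadEachTable",  # hypothetical activity name
        "type": "ForEach",
        "typeProperties": {
            "isSequential": False,      # run iterations in parallel
            "batchCount": batch_count,  # maximum concurrent iterations
            "items": {"value": items_expression, "type": "Expression"},
            "activities": [
                {
                    # Placeholder inner activity; a real pipeline would
                    # typically put a Copy activity here instead.
                    "name": "PlaceholderLoad",
                    "type": "Wait",
                    "typeProperties": {"waitTimeInSeconds": 1},
                }
            ],
        },
    }


if __name__ == "__main__":
    activity = build_parallel_foreach("@pipeline().parameters.tableList", batch_count=10)
    print(json.dumps(activity, indent=2))
```

In practice you would usually set the same options in the web portal rather than writing the JSON by hand; the dictionary just shows which settings are involved.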

#17 Submit new features to the Product Team

As with many other Azure Products, if you are missing a feature, you can submit an idea here. If it is popular and gets voted up, it will be included in the backlog of upcoming features for ADF. It may take a while, but you are likely to get your feature if your idea is registered.

#18 PowerShell

A large set of PowerShell APIs is available for your use here. You can manage the service or develop new solutions.

#19 One-stop-shop for Analytics

Azure Data Factory is included as part of the Azure Synapse Analytics Workspace Experience. The service has been called a one-stop-shop for analytics. (Check this blog post)

#20 Security

Azure Data Factory offers different security levels and tools to guarantee security, including:

- Data encrypted in transit

- Connections are encrypted

- Role-based access control (RBAC) groups are available in Azure

- Private endpoints

- Use your VNet to host the service

- Governance through Azure Portal

Disadvantages of Azure Data Factory

Many limitations in the service will eventually disappear, but some of them will stay around for longer.

- Limitations on the number of roles to manage the platform. It’s necessary to create a few custom roles.

- Lack of advanced configuration and development options. These limitations arise only in really advanced and complex scenarios. Because solving these issues is not as popular as other features, it can take some time for the Product Team to address these changes.

- Complex pricing model. ADF is one of the most difficult services in Azure when it comes to forecasting ongoing costs and allocating a budget. New features have been released during the past few months to make pricing easier to understand, but I think that it’s still difficult for new users.

- Lack of unit testing and integration with other tools in the Visual Studio stack. By only using the web browser experience, you will run into limitations.

- Because development happens in the web browser, you have to make sure to create Azure policies so people cannot access it from their homes or phones over the public internet.

- Expressions in data flows or pipelines take some time to understand. At the end of the day, you will always use the same ones, but it takes some time to get used to them.

Summary

In summary, there are many advantages and a few drawbacks to using Azure Data Factory. As the service continues to grow, we will see even more changes.

Am I missing any key benefits or current key limitations? Leave a comment below.

Final Thoughts

My journey with Azure Data Factory has been amazing. I’ve had the opportunity to present on ADF at different user groups, work with ADF in many solutions for different customers, and help people get started with Azure Data Factory.

I’ve seen the progress from the first version to the current service, and I’m looking forward to seeing more features in the future.

To stay up-to-date with all things Azure, please follow me on Twitter at TechTalkCorner.

2 Responses

Askeland

21.02.2021

Some extra limitations:

– Max 40 activities per pipeline.

– No support for logical OR as dependency between activities. An activity for failure (e.g. scale down and send email) must be repeated for all activities in the pipeline. Remember the max 40 activities limit.

– Dependency between pipelines can be implemented using a tumbling window trigger, but each trigger can depend on a maximum of 5 others.

– Hard to read/code review autogenerated JSON.

David Alzamendi

27.02.2021

Hi Askeland,

Thank you for reading the article and for pointing out some more limitations! I didn’t want to go into much detail in this intro blog post.

Regards,

David